Databricks notebook execution contexts

When you attach a notebook to a cluster, Databricks creates an execution context. An execution context contains the state for a REPL environment for each supported programming language: Python, R, Scala, and SQL. When you run a cell in a notebook, the command is dispatched to the appropriate language REPL environment and run.

You can also use the command execution API to create an execution context and send a command to run in the execution context. Similarly, the command is dispatched to the language REPL environment and run.
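As a rough illustration of that flow, the sketch below builds (but does not send) the two HTTP requests involved: one to create an execution context and one to run a command in it. It assumes the 1.2 version of the command execution API with the `contexts/create` and `commands/execute` endpoints. The workspace URL, token, cluster ID, and helper names are hypothetical placeholders, so check the API reference for your workspace before relying on it.

```python
import json
from urllib.request import Request

API_ROOT = "/api/1.2"  # assumed API version for the command execution API


def build_request(host, token, path, payload):
    """Build (but do not send) an authenticated JSON POST request."""
    return Request(
        url=f"{host.rstrip('/')}{API_ROOT}{path}",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def create_context_request(host, token, cluster_id, language="python"):
    """Request that asks the cluster to create an execution context."""
    payload = {"clusterId": cluster_id, "language": language}
    return build_request(host, token, "/contexts/create", payload)


def execute_command_request(host, token, cluster_id, context_id, command,
                            language="python"):
    """Request that runs a command in an existing execution context."""
    payload = {
        "clusterId": cluster_id,
        "contextId": context_id,
        "language": language,
        "command": command,
    }
    return build_request(host, token, "/commands/execute", payload)
```

To actually send a request, pass it to `urllib.request.urlopen` and parse the JSON response. The context ID returned by the create call is what the execute call would take as `context_id`.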

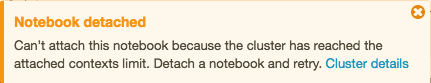

A cluster has a maximum number of execution contexts (145). Once the number of execution contexts has reached this threshold, you cannot attach a notebook to the cluster or create a new execution context.

Idle execution contexts

An execution context is considered idle when the time since its last completed execution exceeds a set idle threshold. Last completed execution is the last time the notebook completed execution of commands. The idle threshold is the amount of time that must pass between the last completed execution and any attempt to automatically detach the notebook.

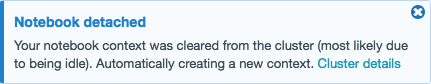

When a cluster has reached the maximum context limit, Databricks removes (evicts) idle execution contexts as needed, starting with the least recently used. Even when a context is removed, the notebook using the context is still attached to the cluster and appears in the cluster’s notebook list. Streaming notebooks are considered actively running, and their context is not evicted until their execution has been stopped. If an idle context is evicted, the UI displays a message indicating that the notebook using the context was detached due to being idle.
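The eviction rule can be pictured with a small toy model. This is only a sketch of the behavior described above, not Databricks's implementation: the `Context` class, the `attach` helper, and the `idle_threshold` parameter are hypothetical names, and the actual threshold value is not specified here.

```python
from dataclasses import dataclass

MAX_CONTEXTS = 145  # per-cluster limit stated in the text


@dataclass
class Context:
    notebook: str
    last_completed: float  # time (seconds) the notebook last finished running commands
    streaming: bool = False  # streaming notebooks count as actively running


def is_idle(ctx, now, idle_threshold):
    """A context is idle if it is not streaming and the threshold has passed."""
    if ctx.streaming:
        return False
    return now - ctx.last_completed > idle_threshold


def attach(contexts, new_ctx, now, idle_threshold,
           max_contexts=MAX_CONTEXTS, auto_evict=True):
    """Add new_ctx to contexts, evicting the least recently used idle context
    if the limit has been reached. Returns the evicted context or None.
    Raises RuntimeError when the limit is reached and nothing can be evicted."""
    evicted = None
    if len(contexts) >= max_contexts:
        idle = []
        if auto_evict:
            idle = [c for c in contexts if is_idle(c, now, idle_threshold)]
        if not idle:
            raise RuntimeError("maximum execution contexts threshold reached")
        # Least recently used = oldest last completed execution.
        evicted = min(idle, key=lambda c: c.last_completed)
        contexts.remove(evicted)
    contexts.append(new_ctx)
    return evicted
```

In this model a streaming context is never a candidate for eviction, and with `auto_evict=False` a full cluster always rejects the new context.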

If you attempt to attach a notebook to a cluster that has reached the maximum number of execution contexts and there are no idle contexts (or if auto-eviction is disabled), the UI displays a message saying that the current maximum execution contexts threshold has been reached, and the notebook remains in the detached state.

If you fork a process, the execution context is still considered idle once execution of the request that forked the process returns. Forking separate processes is not recommended with Spark.

Configure context auto-eviction

Auto-eviction is enabled by default. To disable auto-eviction for a cluster, set the Spark property spark.databricks.chauffeur.enableIdleContextTracking false.
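If you define clusters programmatically, the same property can be carried in the cluster specification. The sketch below assumes the specification is a dictionary with a `spark_conf` map, as used by the Clusters API. The helper name and the example cluster name are hypothetical.

```python
PROPERTY = "spark.databricks.chauffeur.enableIdleContextTracking"


def with_auto_eviction_disabled(cluster_spec):
    """Return a copy of cluster_spec whose spark_conf disables auto-eviction.

    The input dictionary is left unmodified.
    """
    spark_conf = dict(cluster_spec.get("spark_conf", {}))
    spark_conf[PROPERTY] = "false"  # Spark properties are passed as strings
    return {**cluster_spec, "spark_conf": spark_conf}


# Hypothetical, deliberately incomplete cluster specification.
example_spec = {"cluster_name": "example-cluster"}
updated_spec = with_auto_eviction_disabled(example_spec)
```

Any Spark properties already present in `spark_conf` are preserved, and only the idle context tracking property is added or overwritten.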

Determine Spark and Databricks Runtime version

To determine the Spark version of the cluster your notebook is attached to, run:

spark.version

To determine the Databricks Runtime version of the cluster your notebook is attached to, run:

spark.conf.get("spark.databricks.clusterUsageTags.sparkVersion")

Note

Both this sparkVersion tag and the spark_version property required by the endpoints in the Clusters API and Jobs API refer to the Databricks Runtime version, not the Spark version.
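Because the tag holds a Databricks Runtime version, it is sometimes useful to extract the major and minor numbers from it. The following is a minimal sketch that assumes the tag begins with `<major>.<minor>` (for example, a value shaped like `13.3.x-scala2.12`). The helper name and the sample value are illustrative, and other tag formats may exist.

```python
import re


def parse_runtime_version(tag):
    """Extract (major, minor) from a Databricks Runtime version string.

    Assumes the string starts with '<major>.<minor>'; raises ValueError otherwise.
    """
    match = re.match(r"(\d+)\.(\d+)", tag)
    if match is None:
        raise ValueError(f"unrecognized runtime version format: {tag!r}")
    return int(match.group(1)), int(match.group(2))


# Illustrative value only; in a notebook you would pass the result of
# spark.conf.get("spark.databricks.clusterUsageTags.sparkVersion") instead.
major, minor = parse_runtime_version("13.3.x-scala2.12")
```

The result is the Databricks Runtime version, which is distinct from the Apache Spark version reported by spark.version.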