Connect to Google Cloud Storage

Note

This article describes legacy patterns for configuring access to GCS. Databricks recommends using Unity Catalog to configure access to GCS and volumes for direct interaction with files. See Connect to cloud object storage using Unity Catalog.

This article describes how to configure a connection from Databricks to read and write tables and data stored on Google Cloud Storage (GCS).

Access GCS buckets using Google Cloud service accounts on clusters

You can access GCS buckets using Google Cloud service accounts on clusters. You must grant the service account permissions to read from and write to the GCS bucket. Databricks recommends giving this service account the least privileges needed to perform its tasks. You can then associate that service account with a Databricks cluster.

You can connect to the bucket directly using the service account email address (recommended approach) or a key that you generate for the service account.

Important

The service account must be in the Google Cloud project that you used to set up the Databricks workspace.

The GCP user who creates the service account role must:

Be a GCP account user with the permissions to create service accounts and grant roles for reading from and writing to a GCS bucket.

The Databricks user who adds the service account to a cluster must have the Can manage permission on a cluster.

Step 1: Set up Google Cloud service account using Google Cloud Console

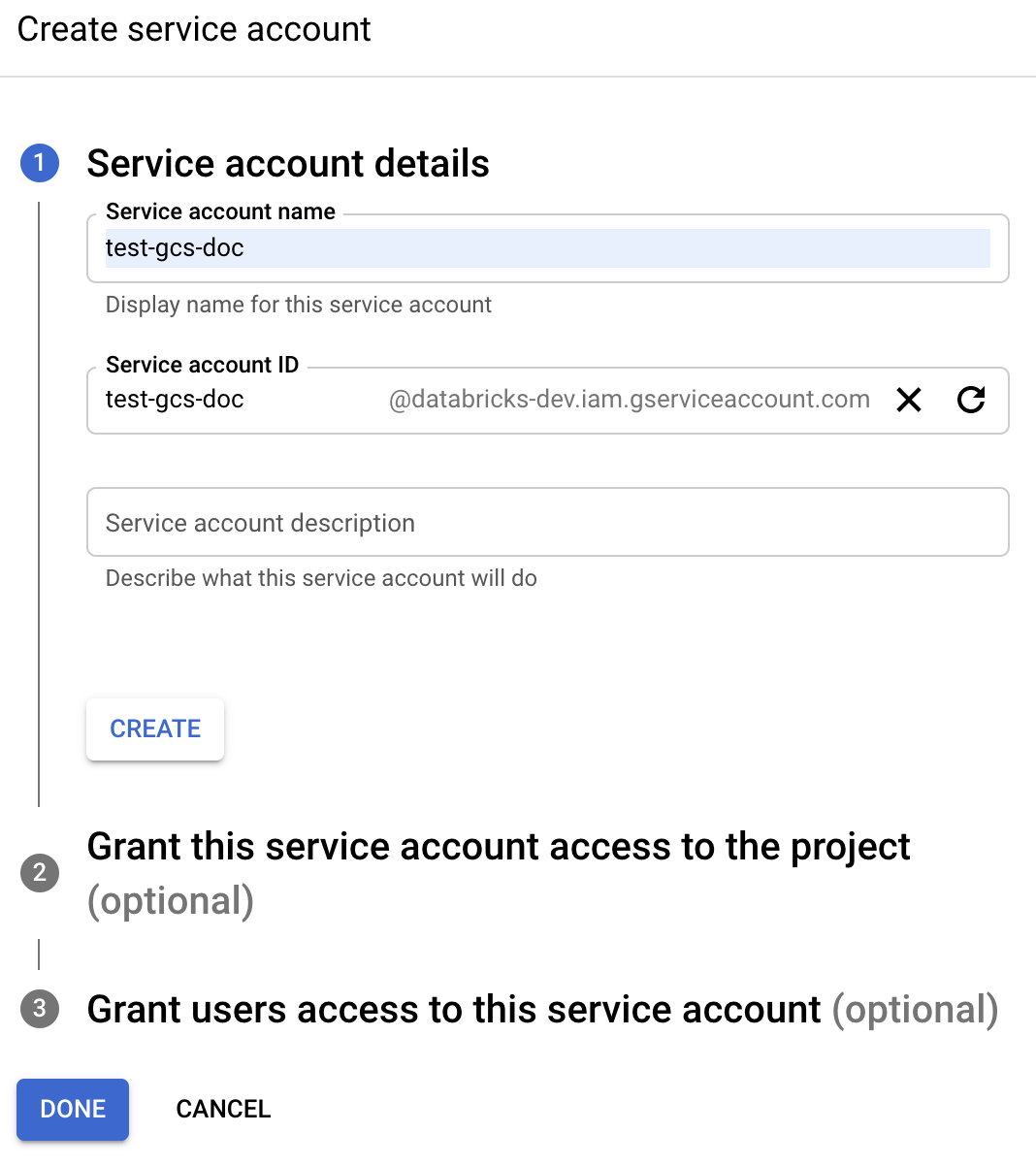

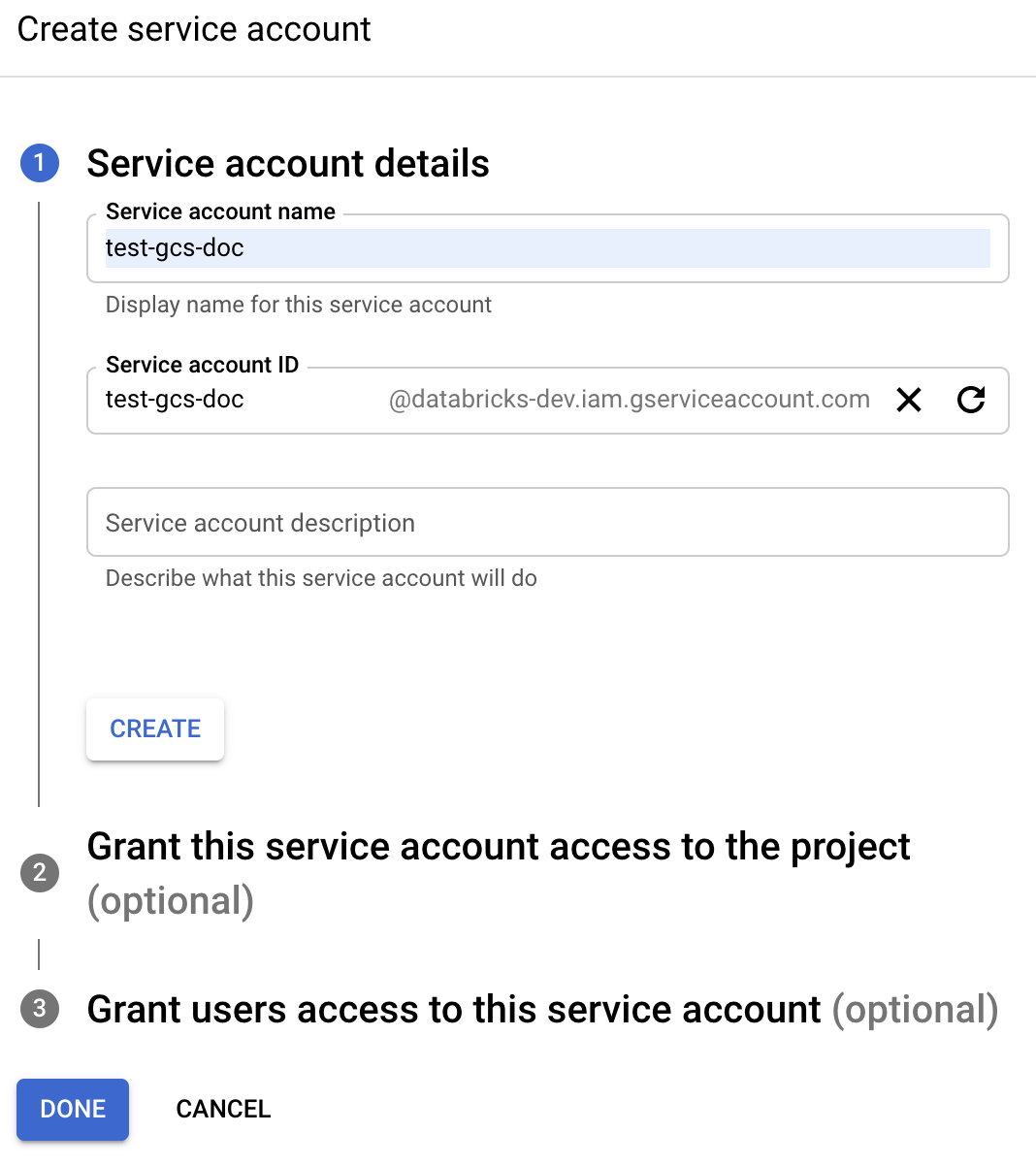

Click IAM and Admin in the left navigation pane.

Click Service Accounts.

Click + CREATE SERVICE ACCOUNT.

Enter the service account name and description.

Click CREATE.

Click CONTINUE.

Click DONE.

Navigate to the Google Cloud Console list of service accounts and select a service account.

Copy the associated email address. You will need it when you set up Databricks clusters.

Step 2: Configure your GCS bucket

Create a bucket

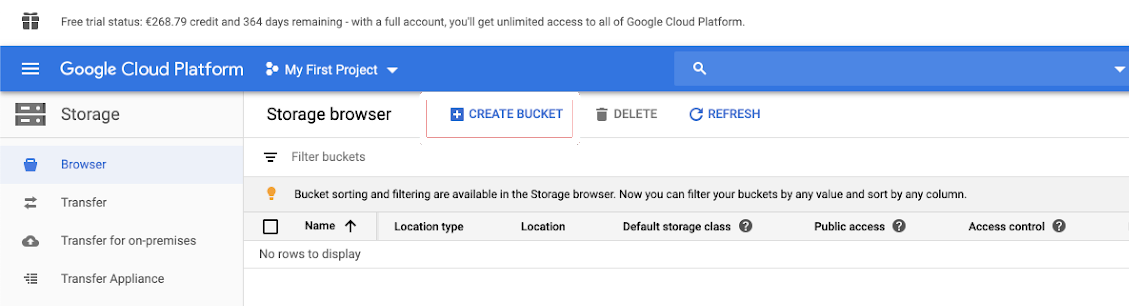

If you do not already have a bucket, create one:

Click Storage in the left navigation pane.

Click CREATE BUCKET.

Name your bucket. Pick a globally unique and permanent name that complies with Google’s naming requirements for GCS buckets.

Important

To work with DBFS mounts, your bucket name must not contain an underscore.

Click CREATE.
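Before you create the bucket, a quick name check can catch the underscore restriction early. The following is a minimal sketch, not an official validator: the pattern covers only a subset of Google's naming requirements as assumed here (3 to 63 characters; lowercase letters, digits, dashes, underscores, and dots; starting and ending with a letter or digit), and the function name `check_bucket_name` is hypothetical.

```python
import re

# Assumed subset of Google's bucket naming rules; consult Google's
# naming requirements for the full list.
_GCS_NAME = re.compile(r"^[a-z0-9][a-z0-9._-]{1,61}[a-z0-9]$")


def check_bucket_name(name, for_dbfs_mount=True):
    """Return a list of problems found; an empty list means none detected."""
    problems = []
    if not _GCS_NAME.match(name):
        problems.append("does not match the basic GCS naming pattern")
    # Underscores are valid for GCS itself but not for DBFS mounts.
    if for_dbfs_mount and "_" in name:
        problems.append("contains an underscore, which DBFS mounts do not allow")
    return problems


print(check_bucket_name("my-data-bucket"))
print(check_bucket_name("my_data_bucket"))
```

An empty list means that no problem was detected by this sketch, not that Google will accept the name.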

Configure the bucket

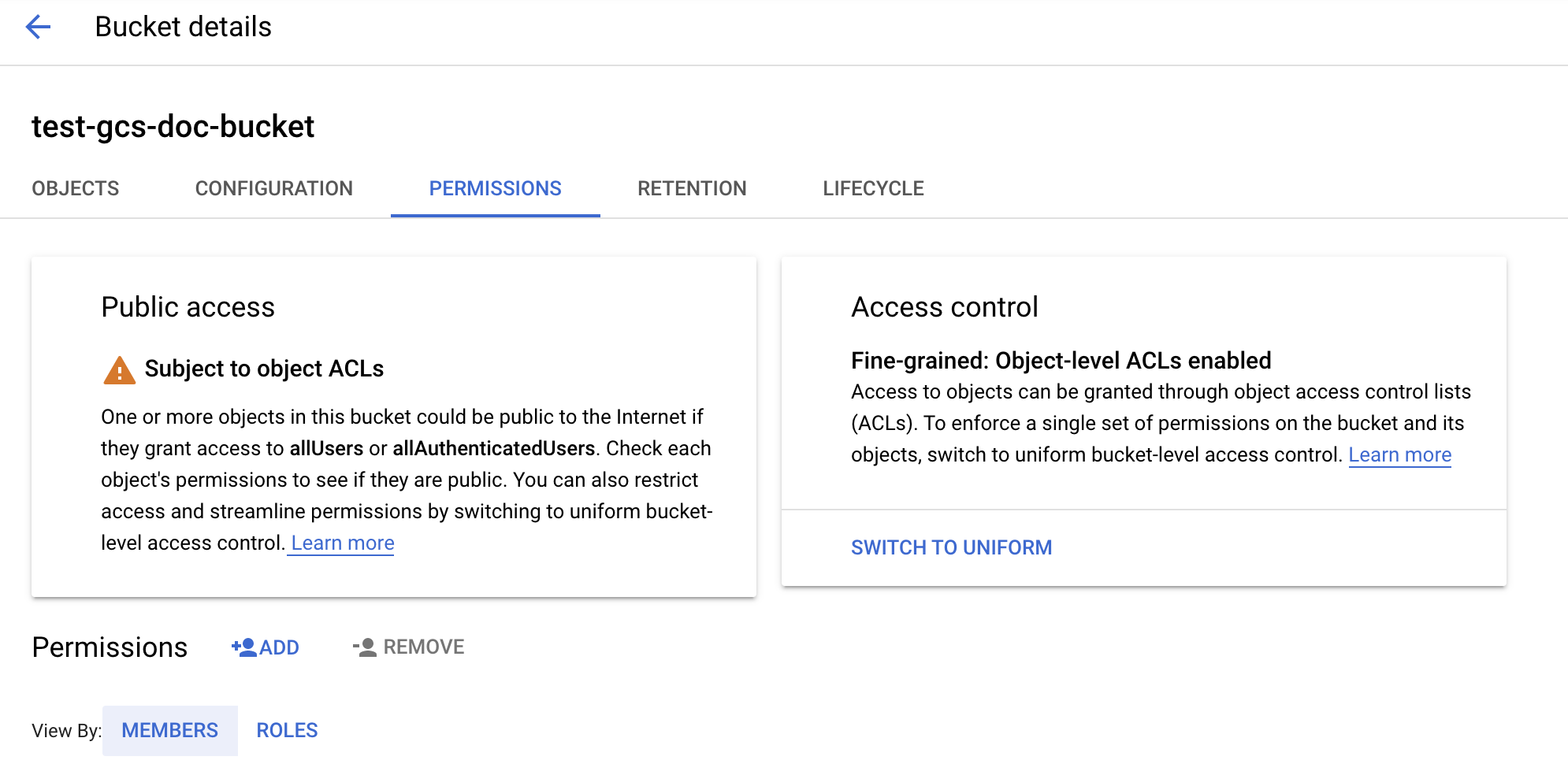

Configure the bucket:

Configure the bucket details.

Click the Permissions tab.

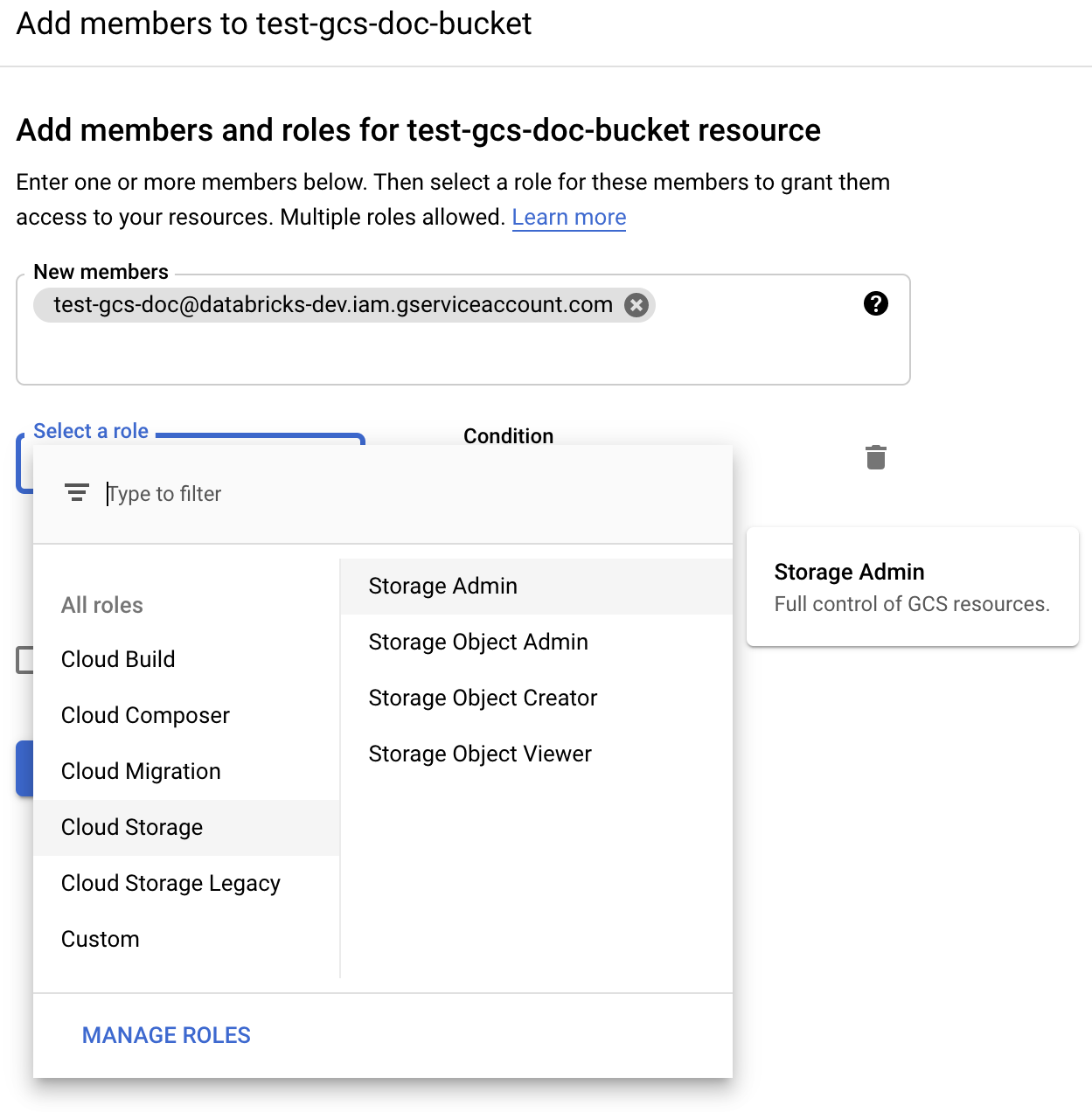

Next to the Permissions label, click ADD.

Provide the desired permission to the service account on the bucket from the Cloud Storage roles:

Storage Admin: Grants full privileges on this bucket.

Storage Object Viewer: Grants read and list permissions on objects in this bucket.

Click SAVE.
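For reference, the permission granted above corresponds to an IAM policy binding on the bucket. The sketch below only builds the binding as a Python dictionary so that the pieces are visible; it does not call any Google Cloud API. The role IDs `roles/storage.admin` and `roles/storage.objectViewer` are the identifiers for the two roles listed above, while the helper name `bucket_binding` and the sample email address are hypothetical.

```python
# Console role names mapped to their IAM role IDs.
ROLE_IDS = {
    "Storage Admin": "roles/storage.admin",
    "Storage Object Viewer": "roles/storage.objectViewer",
}


def bucket_binding(role_name, service_account_email):
    """Build one IAM binding that grants a role to a service account."""
    return {
        "role": ROLE_IDS[role_name],
        # Service accounts are identified with the "serviceAccount:" prefix.
        "members": ["serviceAccount:" + service_account_email],
    }


# Hypothetical service account email, for illustration only.
binding = bucket_binding(
    "Storage Object Viewer",
    "example-sa@example-project.iam.gserviceaccount.com",
)
print(binding)
```

Choosing Storage Object Viewer for read-only workloads keeps the service account closer to the least-privilege recommendation.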

Step 3: Configure a Databricks cluster

When you configure your cluster, expand Advanced Options and set the Google Service Account field to your service account email address.

Use both cluster access control and notebook access control together to protect access to the service account and data in the GCS bucket. See Compute permissions and Collaborate using Databricks notebooks.
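If you create clusters programmatically rather than through the UI, the same setting can be expressed in a cluster specification. The following sketch assumes that the Clusters API exposes the service account as `gcp_attributes.google_service_account`; verify the field name against the API reference for your workspace. All values shown, including the cluster name, runtime version, and node type placeholders, are hypothetical.

```python
import json


def cluster_spec(service_account_email):
    """Build a cluster specification that attaches a Google service account."""
    return {
        "cluster_name": "gcs-access-example",   # hypothetical name
        "spark_version": "<spark-version>",     # placeholder, fill in
        "node_type_id": "<node-type-id>",       # placeholder, fill in
        "num_workers": 1,
        "gcp_attributes": {
            # Assumed API equivalent of the Google Service Account UI field.
            "google_service_account": service_account_email,
        },
    }


spec = cluster_spec("example-sa@example-project.iam.gserviceaccount.com")
print(json.dumps(spec, indent=2))
```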

Access a GCS bucket directly with a Google Cloud service account key

To read and write directly to a bucket, you can either set the service account email address or configure a key defined in your Spark configuration.

Note

Databricks recommends using the service account email address because there are no keys involved, so there is no risk of leaking the keys. One reason to use a key is if the service account needs to be in a different Google Cloud project than the project that was used when creating the workspace. To use a service account email address, see Access GCS buckets using Google Cloud service accounts on clusters.

Step 1: Set up Google Cloud service account using Google Cloud Console

You must create a service account for the Databricks cluster. Databricks recommends giving this service account the least privileges needed to perform its tasks.

Click IAM and Admin in the left navigation pane.

Click Service Accounts.

Click + CREATE SERVICE ACCOUNT.

Enter the service account name and description.

Click CREATE.

Click CONTINUE.

Click DONE.

Step 2: Create a key to access GCS bucket directly

Warning

The JSON key you generate for the service account is a private key that should only be shared with authorized users as it controls access to datasets and resources in your Google Cloud account.

In the Google Cloud console, in the service accounts list, click the newly created account.

In the Keys section, click ADD KEY > Create new key.

Accept the JSON key type.

Click CREATE. The key file is downloaded to your computer.

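Step 3: Configure the GCS bucket

Create a bucket if you do not already have one, and grant the service account access to it, as described in Step 2: Configure your GCS bucket.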

Step 4: Put the service account key in Databricks secrets

Databricks recommends using secret scopes for storing all credentials. You can put the private key and private key ID from your key JSON file into Databricks secret scopes. You can grant users, service principals, and groups in your workspace access to read the secret scopes. This protects the service account key while allowing users to access GCS. To create a secret scope, see Manage secrets.
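To see which values belong in a secret scope and which are safe to place directly in the Spark configuration, you can split the key file by field. This is a minimal sketch under stated assumptions: it relies on the standard field names of a service account key JSON file (`client_email`, `project_id`, `private_key`, `private_key_id`), the helper name `split_key_file` is hypothetical, and the sample key is fabricated. The secret key names `gsa_private_key` and `gsa_private_key_id` follow the Spark configuration examples in Step 5.

```python
import json

# Field names as they appear in a service account key JSON file.
REQUIRED_FIELDS = ("client_email", "project_id", "private_key", "private_key_id")


def split_key_file(key_json_text):
    """Separate the secret values from the non-secret values in a key file."""
    key = json.loads(key_json_text)
    missing = [name for name in REQUIRED_FIELDS if name not in key]
    if missing:
        raise ValueError("key file is missing fields: " + ", ".join(missing))
    secrets = {
        "gsa_private_key": key["private_key"],
        "gsa_private_key_id": key["private_key_id"],
    }
    plain = {"client_email": key["client_email"], "project_id": key["project_id"]}
    return secrets, plain


# Fabricated sample for illustration only; a real file comes from Step 2.
sample = json.dumps({
    "type": "service_account",
    "project_id": "example-project",
    "private_key_id": "0123abcd",
    "private_key": "-----BEGIN PRIVATE KEY-----\nFAKE\n-----END PRIVATE KEY-----\n",
    "client_email": "example-sa@example-project.iam.gserviceaccount.com",
})

secrets, plain = split_key_file(sample)
print(sorted(secrets))  # print the secret names only, never the values
print(plain)
```

Store the two values in `secrets` in your secret scope, and avoid printing or logging them in notebooks.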

Step 5: Configure a Databricks cluster

In the Spark Config tab, configure either a global configuration or a per-bucket configuration. The following examples set the keys using values stored as Databricks secrets.

Note

Use cluster access control and notebook access control together to protect access to the service account and data in the GCS bucket. See Compute permissions and Collaborate using Databricks notebooks.

Global configuration

Use this configuration if the provided credentials should be used to access all buckets.

spark.hadoop.google.cloud.auth.service.account.enable true

spark.hadoop.fs.gs.auth.service.account.email <client-email>

spark.hadoop.fs.gs.project.id <project-id>

spark.hadoop.fs.gs.auth.service.account.private.key {{secrets/scope/gsa_private_key}}

spark.hadoop.fs.gs.auth.service.account.private.key.id {{secrets/scope/gsa_private_key_id}}

Replace <client-email> and <project-id> with the values of the client_email and project_id fields from your key JSON file.
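If you manage several clusters, generating these lines from a template helps avoid typos. The sketch below renders the global configuration shown above as text that you can paste into the Spark Config field. The function names are hypothetical, and the secret key names are taken from the example above.

```python
def secret_ref(scope, key):
    """Build a secret reference in the {{secrets/<scope>/<key>}} form."""
    return "{{secrets/" + scope + "/" + key + "}}"


def global_gcs_conf(client_email, project_id, scope):
    """Render the global GCS configuration lines for the Spark Config field."""
    prefix = "spark.hadoop.fs.gs."
    lines = [
        "spark.hadoop.google.cloud.auth.service.account.enable true",
        prefix + "auth.service.account.email " + client_email,
        prefix + "project.id " + project_id,
        prefix + "auth.service.account.private.key "
        + secret_ref(scope, "gsa_private_key"),
        prefix + "auth.service.account.private.key.id "
        + secret_ref(scope, "gsa_private_key_id"),
    ]
    return "\n".join(lines)


# Hypothetical values, for illustration only.
conf_text = global_gcs_conf(
    "example-sa@example-project.iam.gserviceaccount.com",
    "example-project",
    "scope",
)
print(conf_text)
```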

Per-bucket configuration

Use this configuration if you must configure credentials for specific buckets. The syntax for per-bucket configuration appends the bucket name to the end of each configuration key, as in the following example.

Important

Per-bucket configurations can be used in addition to global configurations. When specified, per-bucket configurations supersede global configurations.

spark.hadoop.google.cloud.auth.service.account.enable.<bucket-name> true

spark.hadoop.fs.gs.auth.service.account.email.<bucket-name> <client-email>

spark.hadoop.fs.gs.project.id.<bucket-name> <project-id>

spark.hadoop.fs.gs.auth.service.account.private.key.<bucket-name> {{secrets/scope/gsa_private_key}}

spark.hadoop.fs.gs.auth.service.account.private.key.id.<bucket-name> {{secrets/scope/gsa_private_key_id}}

Replace <client-email> and <project-id> with the values of the client_email and project_id fields from your key JSON file.
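The precedence between per-bucket and global settings can be illustrated with a small lookup. This sketch only models the rule described in this section (a key with the bucket name appended wins over the plain key); it is not the connector's actual implementation, and the function name, bucket names, and email addresses are hypothetical.

```python
def effective_value(conf, base_key, bucket):
    """Return the per-bucket value if one is set, else the global value."""
    per_bucket_key = base_key + "." + bucket
    if per_bucket_key in conf:
        return conf[per_bucket_key]
    return conf.get(base_key)


EMAIL_KEY = "spark.hadoop.fs.gs.auth.service.account.email"

# Hypothetical configuration: one global account, one override for bucket-a.
conf = {
    EMAIL_KEY: "global-sa@example-project.iam.gserviceaccount.com",
    EMAIL_KEY + ".bucket-a": "bucket-a-sa@example-project.iam.gserviceaccount.com",
}

print(effective_value(conf, EMAIL_KEY, "bucket-a"))  # per-bucket account
print(effective_value(conf, EMAIL_KEY, "bucket-b"))  # falls back to global
```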

Step 6: Read from GCS

To read from the GCS bucket, use a Spark read command in any supported format, for example:

df = spark.read.format("parquet").load("gs://<bucket-name>/<path>")

To write to the GCS bucket, use a Spark write command in any supported format, for example:

df.write.mode("<mode>").save("gs://<bucket-name>/<path>")

Replace <bucket-name> with the name of the bucket you created in Step 3: Configure the GCS bucket.
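The read and write commands above can be combined into a small round trip. The following is a sketch under stated assumptions: the helper names `gcs_uri` and `copy_parquet` are hypothetical, the data is assumed to be Parquet, and `spark` is the SparkSession that Databricks notebooks provide. The `mode` argument accepts the standard Spark save modes, such as `append`, `overwrite`, `ignore`, and `errorifexists`.

```python
def gcs_uri(bucket, path):
    """Build a gs:// URI, tolerating a leading slash in the object path."""
    return "gs://" + bucket + "/" + path.lstrip("/")


def copy_parquet(spark, bucket, src_path, dst_path, mode="overwrite"):
    """Read Parquet data from one GCS path and write it to another."""
    df = spark.read.format("parquet").load(gcs_uri(bucket, src_path))
    df.write.format("parquet").mode(mode).save(gcs_uri(bucket, dst_path))
    return df


# Path construction can be checked without a cluster.
print(gcs_uri("my-data-bucket", "/raw/events"))
```

In a notebook attached to the configured cluster, you would call `copy_parquet(spark, "<bucket-name>", "<source-path>", "<target-path>")`.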